Introduction

The Restricted Boltzmann Machine (RBM) is an unsupervised learning algorithm: a network of symmetrically connected, neuron-like units that make stochastic decisions. It was introduced by Paul Smolensky in 1986 under the name Harmonium and was later popularized by Geoffrey Hinton and colleagues, who developed fast training algorithms for it in the mid-2000s. RBMs became very popular after the Netflix Prize competition, where they were used as a collaborative filtering technique to predict user ratings for movies and outperformed most competing approaches. They are useful for regression, classification, dimensionality reduction, feature learning, topic modelling and collaborative filtering.

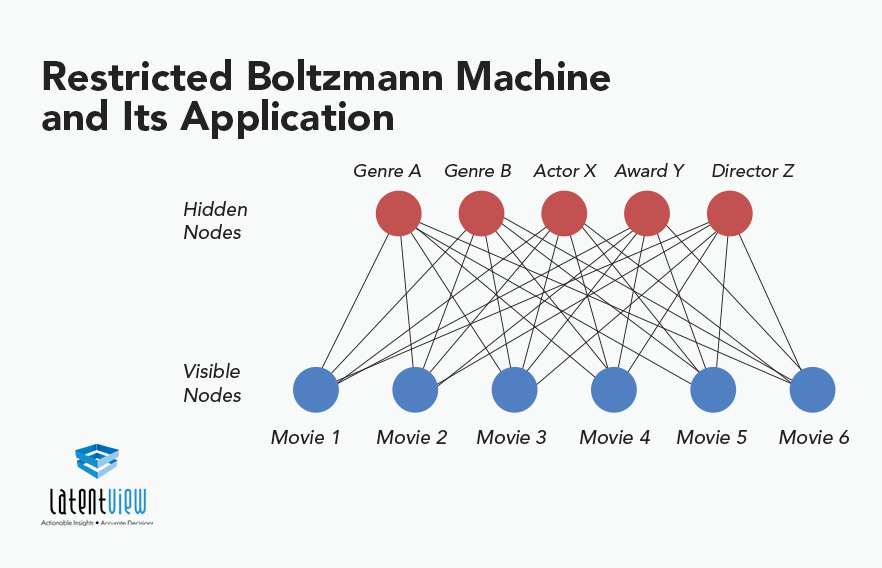

Restricted Boltzmann Machines are stochastic, two-layered neural networks that belong to the category of energy-based models. They detect inherent patterns in the data automatically by reconstructing their input. The two layers are called visible and hidden. The visible layer consists of input nodes (nodes that receive the input data), and the hidden layer consists of nodes that extract feature information from the data; the output of each hidden node is computed from a weighted sum of the inputs.

It might seem strange, but RBMs have no output nodes and no typical output through which patterns are learnt. Learning happens without that capability, which is what makes them different. We only supply values for the input nodes and do not set the hidden nodes ourselves. Once the input is provided, RBMs automatically capture the patterns, parameters and correlations in the data. That’s the beauty of the Restricted Boltzmann Machine.

RBMs are used in many real-world business use cases, such as:

- Pattern recognition: RBMs are used for feature extraction in pattern recognition problems where the challenge is to understand handwritten text or a random pattern.

- Recommendation engines: RBMs are widely used for collaborative filtering, where they predict what should be recommended to the end user so that the user enjoys using a particular application or platform. Examples: movie recommendation, book recommendation.

- Radar target recognition: here, RBMs are used to recognize intra-pulse features in radar systems that have a very low signal-to-noise ratio (SNR) and high noise.

Features of Restricted Boltzmann Machine

Some important features of the Restricted Boltzmann Machine:

- They use a recurrent and symmetric structure: the same weights connect the visible and hidden units in both directions.

- During learning, RBMs try to associate high probability with low-energy states and low probability with high-energy states.

- There are no intra-layer connections.

- It is an unsupervised learning algorithm, i.e., it makes inferences from input data without labeled responses.
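The link between energy and probability in the list above can be written out explicitly. The following is the standard form for an RBM with binary units. The symbols are notation introduced here, not taken from the text: v_i and h_j are visible and hidden states, a_i and b_j are the visible and hidden biases, w_ij are the weights, and sigma is the logistic sigmoid.

```latex
E(v, h) = -\sum_i a_i v_i - \sum_j b_j h_j - \sum_{i,j} v_i w_{ij} h_j

p(v, h) = \frac{e^{-E(v, h)}}{Z},
\qquad
Z = \sum_{v', h'} e^{-E(v', h')}

p(h_j = 1 \mid v) = \sigma\Big(b_j + \sum_i v_i w_{ij}\Big),
\qquad
p(v_i = 1 \mid h) = \sigma\Big(a_i + \sum_j w_{ij} h_j\Big)
```

A low energy therefore means a high probability. Because the energy has no term coupling two units of the same layer (no intra-layer connections), each unit's conditional probability depends only on the units of the other layer.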

Let’s understand how a Restricted Boltzmann Machine differs from a Boltzmann Machine. Both models have two kinds of units, visible and hidden. In a Boltzmann Machine, each neuron in the visible layer is connected to each neuron in the hidden layer, and the neurons within each layer are also connected to one another. An RBM is a special case of the Boltzmann Machine with the restriction that neurons within the same layer are not connected, i.e., there is no intra-layer communication. This makes the units of one layer conditionally independent given the other layer, so the conditional distribution of a layer breaks down into per-unit probabilities that are easy to compute, which in turn makes RBMs easier to implement. The diagrams below illustrate the difference:

Working of RBM

As mentioned earlier, the Restricted Boltzmann Machine is an unsupervised learning algorithm, so how does it learn without any output data? The visible layer of neurons receives the input data, which is multiplied by the weights and added to the bias of each hidden neuron to generate that neuron's output. This is the forward pass. The output of the hidden layer then becomes a new input: it is multiplied by the same weights, and the bias of the visible layer is added to regenerate the input. This process is called reconstruction, or the backward pass. The regenerated input is then compared with the original input, and the weights are adjusted according to the difference. This repeats until the regenerated input closely matches the original input.
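The forward pass and the reconstruction described above can be sketched in plain Python. This is a minimal illustration, not a reference implementation: it assumes binary units and a sigmoid activation (the text does not name one), and the function names `hidden_probs`, `visible_probs`, `reconstruct` and `reconstruction_error` are hypothetical.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def hidden_probs(v, W, b_hidden):
    """Forward pass: weighted sum of the inputs plus the hidden bias, squashed to a probability."""
    return [
        sigmoid(b_hidden[j] + sum(v[i] * W[i][j] for i in range(len(v))))
        for j in range(len(b_hidden))
    ]


def visible_probs(h, W, b_visible):
    """Backward pass: the same weights W are reused, now with the visible bias."""
    return [
        sigmoid(b_visible[i] + sum(W[i][j] * h[j] for j in range(len(h))))
        for i in range(len(b_visible))
    ]


def reconstruct(v, W, b_visible, b_hidden, rng):
    """One forward pass followed by one backward pass (reconstruction)."""
    p_h = hidden_probs(v, W, b_hidden)
    # Hidden units make stochastic binary decisions.
    h = [1 if rng.random() < p else 0 for p in p_h]
    return visible_probs(h, W, b_visible)


def reconstruction_error(v, v_recon):
    """Mean squared difference between the original and the regenerated input."""
    return sum((x - y) ** 2 for x, y in zip(v, v_recon)) / len(v)


if __name__ == "__main__":
    rng = random.Random(0)
    v = [1, 0, 1, 0]  # toy binary input
    # 4 visible x 2 hidden weights, small random values (an arbitrary choice)
    W = [[rng.gauss(0, 0.1) for _ in range(2)] for _ in v]
    v_recon = reconstruct(v, W, [0.0] * 4, [0.0] * 2, rng)
    print(reconstruction_error(v, v_recon))
```

With untrained weights the reconstruction is close to 0.5 for every unit, so the error is high; training is what drives it down.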

For example, suppose we have a set of six movies: Avatar, Oblivion, Avengers, Gravity, Wonder Woman and Fast & Furious 7. The movies become the visible neurons, and the latent features we are trying to learn become the hidden neurons. The diagram below shows the resulting Restricted Boltzmann Machine.

Here, Avatar, Oblivion and Gravity fall under the Sci-Fi genre and the remaining movies fall under Thriller. So Sci-Fi and Thriller become the neurons of the hidden layer: they are the features extracted from our input (the set of movies).

If a person tells us her movie preferences, our RBM can activate the hidden neurons that correspond to those preferences. The movies she likes send messages to the hidden neurons, which update their states for that user.

Conversely, if a user likes Thriller movies, our RBM can find the movies that are turned on by the hidden neuron “Thriller”: the hidden neurons send messages to the visible neurons to update their states. This is how the RBM works as a movie recommendation engine.
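The movie example can be made concrete with a toy sketch. Everything numeric here is an assumption for illustration: the weights and biases are hand-picked rather than learned, the hidden and visible states are thresholded at 0.5 instead of sampled so that the result is deterministic, and `genre_activations` and `recommend` are hypothetical names.

```python
import math

MOVIES = ["Avatar", "Oblivion", "Avengers", "Gravity", "Wonder Woman", "Fast & Furious 7"]
GENRES = ["Sci-Fi", "Thriller"]

# Hand-picked, hypothetical weights: +4 links a movie to its genre, -4 to the other one.
# A trained RBM would learn such values from data instead.
W = [
    [4.0, -4.0],   # Avatar
    [4.0, -4.0],   # Oblivion
    [-4.0, 4.0],   # Avengers
    [4.0, -4.0],   # Gravity
    [-4.0, 4.0],   # Wonder Woman
    [-4.0, 4.0],   # Fast & Furious 7
]
B_VISIBLE = [-2.0] * len(MOVIES)
B_HIDDEN = [-2.0] * len(GENRES)


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def genre_activations(liked):
    """Visible -> hidden: probability that each genre neuron turns on."""
    v = [1 if m in liked else 0 for m in MOVIES]
    return [
        sigmoid(B_HIDDEN[j] + sum(v[i] * W[i][j] for i in range(len(MOVIES))))
        for j in range(len(GENRES))
    ]


def recommend(liked, threshold=0.5):
    """Hidden -> visible: movies turned on by the active genre neurons, minus those already liked."""
    # Thresholding replaces sampling here to keep the demo deterministic.
    h = [1 if p > threshold else 0 for p in genre_activations(liked)]
    p_v = [
        sigmoid(B_VISIBLE[i] + sum(W[i][j] * h[j] for j in range(len(GENRES))))
        for i in range(len(MOVIES))
    ]
    return [m for m, p in zip(MOVIES, p_v) if p > threshold and m not in liked]
```

With these weights, `recommend({"Avatar", "Gravity"})` activates the Sci-Fi neuron and returns `["Oblivion"]`.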

Training of RBM

RBMs are trained using Gibbs sampling and Contrastive Divergence.

- In statistics, Gibbs sampling is a Markov chain Monte Carlo algorithm for obtaining a sequence of observations which are approximately from a specified multivariate probability distribution, when direct sampling is difficult.

If the input is represented by v and the hidden values by h, then p(h|v) is used to predict (sample) the hidden values from the input. Given the hidden values, p(v|h) is used to predict the regenerated input values. If this process is repeated k times, v_k is obtained from the initial input value v_0 after k iterations.
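The alternation between p(h|v) and p(v|h) can be sketched as a short Gibbs chain. This is a minimal sketch under assumptions: binary units with sigmoid conditionals, and hypothetical helper names `sample_hidden`, `sample_visible` and `gibbs_chain`.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sample_hidden(v, W, b_hidden, rng):
    """Draw h ~ p(h | v); each hidden unit is sampled independently of the others."""
    h = []
    for j in range(len(b_hidden)):
        p = sigmoid(b_hidden[j] + sum(v[i] * W[i][j] for i in range(len(v))))
        h.append(1 if rng.random() < p else 0)
    return h


def sample_visible(h, W, b_visible, rng):
    """Draw v ~ p(v | h), the regenerated input."""
    v = []
    for i in range(len(b_visible)):
        p = sigmoid(b_visible[i] + sum(W[i][j] * h[j] for j in range(len(h))))
        v.append(1 if rng.random() < p else 0)
    return v


def gibbs_chain(v0, W, b_visible, b_hidden, k, rng):
    """Alternate h ~ p(h | v) and v ~ p(v | h) for k steps and return v_k."""
    v = list(v0)
    for _ in range(k):
        h = sample_hidden(v, W, b_hidden, rng)
        v = sample_visible(h, W, b_visible, rng)
    return v
```

A call such as `gibbs_chain(v0, W, b_visible, b_hidden, k=1, rng=random.Random(0))` yields the one-step reconstruction that CD-1 uses.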

- Contrastive Divergence (CD) is an approximate maximum-likelihood learning algorithm. It approximates the gradient, i.e., the slope that represents the relationship between a network’s weights and its error. It is used in situations where a function or set of probabilities cannot be evaluated directly, so some form of inference is needed to approximate the learning gradient and decide which direction to move in.

In CD, the weights are updated as follows: first the gradient is estimated from the difference between the original input and the reconstructed input, then the resulting delta is added to the old weights to get the new weights.
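The update can be sketched as a single CD-k step on one training vector. This is a sketch of the commonly used formulation, assuming binary units, a learning rate `lr`, and hidden probabilities (rather than sampled states) in the gradient statistics; `cd_k_update` and its parameters are hypothetical names.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def p_hidden(v, W, b_hidden):
    return [
        sigmoid(b_hidden[j] + sum(v[i] * W[i][j] for i in range(len(v))))
        for j in range(len(b_hidden))
    ]


def p_visible(h, W, b_visible):
    return [
        sigmoid(b_visible[i] + sum(W[i][j] * h[j] for j in range(len(h))))
        for i in range(len(b_visible))
    ]


def sample(probs, rng):
    return [1 if rng.random() < p else 0 for p in probs]


def cd_k_update(v0, W, b_visible, b_hidden, k=1, lr=0.1, rng=random):
    """Return updated (W, b_visible, b_hidden) after one CD-k step on input v0."""
    # Positive phase: hidden probabilities driven by the original input.
    ph0 = p_hidden(v0, W, b_hidden)

    # Negative phase: k steps of Gibbs sampling give the reconstruction v_k.
    vk = list(v0)
    for _ in range(k):
        hk = sample(p_hidden(vk, W, b_hidden), rng)
        vk = sample(p_visible(hk, W, b_visible), rng)
    phk = p_hidden(vk, W, b_hidden)

    # Gradient estimate: data statistics minus reconstruction statistics.
    # The delta is added to the old weights to obtain the new weights.
    new_W = [
        [W[i][j] + lr * (v0[i] * ph0[j] - vk[i] * phk[j]) for j in range(len(b_hidden))]
        for i in range(len(b_visible))
    ]
    new_bv = [b_visible[i] + lr * (v0[i] - vk[i]) for i in range(len(b_visible))]
    new_bh = [b_hidden[j] + lr * (ph0[j] - phk[j]) for j in range(len(b_hidden))]
    return new_W, new_bv, new_bh
```

When the reconstruction already equals the input, both sets of statistics agree and the delta is zero, which is the sense in which training stops once the regenerated input matches the original.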

Advantages and Disadvantages of RBM

Advantages:

- Expressive enough to encode any distribution over binary vectors, given enough hidden units, while remaining computationally efficient.

- Faster than a traditional Boltzmann Machine because of the restriction on connections between nodes.

- Activations of the hidden layer can be used as input to other models, as useful features that improve performance.

Disadvantages:

- Training is more difficult because the gradient of the energy-based objective is hard to compute exactly.

- The CD-k algorithm used to train RBMs is not as familiar as the backpropagation algorithm.

- Weight Adjustment

Applications of RBM

- Handwritten digit recognition is a very common problem these days and is used in a variety of applications such as criminal evidence, office computerization, check verification and data entry. It comes with challenges such as different writing styles, variations in shape and size, and image noise, which lead to changes in numeral topology. Here a hybrid RBM-CNN methodology is used for digit recognition. First, features are extracted using the RBM. The extracted features are then fed to the CNN for classification. RBMs are highly capable of extracting features from input data: they are designed so that, by introducing hidden units, they can extract discriminative features from large and complex datasets in an unsupervised manner.

- Real-time intra-pulse recognition of radar using RBM: extracting the intra-pulse features of radar signals is of high significance, and it suffers from challenges such as limited data representation capability and limited robustness to noise. To achieve better recognition performance, the ambiguity function (AF) of the radar signals is taken as the intra-pulse characteristic. Many dimension reduction algorithms have been applied to extract the key information in the AF. However, most traditional algorithms have to process a large amount of data, and their speed drops rapidly as the number of sampling points increases, which is contrary to modern requirements. So the restricted Boltzmann machine (RBM), a stochastic neural network, is adopted here to extract features effectively. First, the AF of the radar signals is calculated. Then singular value decomposition (SVD) is applied to the main ridge section of the AF as a noise reduction method for low SNR. Finally, the processed data are fed into the trained RBM to obtain the recognition results.

Conclusion

To summarize, Restricted Boltzmann Machines are unsupervised, two-layered neural models that learn the distribution of their input. They are trained using contrastive divergence, and after training they can generate novel samples that resemble the training data. There are many variations and improvements on the RBM and on the algorithms used for its training and optimization. Some of them, such as the Fuzzy RBM and the Infinite RBM, modify the original RBM to make it more efficient and to improve its representational power.