MLOps

In our previous post, we discussed why you need MLOps and the challenges standing in the way. In this post, let’s talk about getting started on an MLOps journey.

According to Forrester, your AI Transformation is doomed to fail without an MLOps program!

MLOps is not a tool or a technology or even a process. It’s a capability that a company needs to develop, and it needs to be part of the company’s culture for managing the lifecycle of Machine Learning Models.

How do you get started in MLOps?

Given all the challenges outlined previously, companies need to make a concerted effort to implement an MLOps program. Based on our work with many clients in the last several years, we have distilled an approach that can help you get started on the journey:

- Put together a Team. Identify an owner for the MLOps initiative, along with members from Business, Data Science, and DevOps teams.

- Design the MLOps Architecture. Define the architecture for managing all aspects of the model life cycle, tailored specifically to the company’s needs.

- Implement the Architecture. Evaluate various tools and software needed for the implementation. Deploy the software and create the workflows for operationalizing the steps across the ML life cycle: development, deployment, monitoring, and governance.

- Migrate Pilot Models. Migrate a few ML models to the new MLOps stack while activating specific parts of the architecture and the workflows.

- Scale-Out. Migrate the remaining models and start developing new models in the new MLOps stack.

In the next sections, we will expand on some of the above points.

Create the MLOps Team

The MLOps Team consists of members from business, data science, and IT organizations. The team should be led by an enterprise architect who has deep knowledge and experience in operationalizing machine learning.

Placing the Team

In general, the model life cycle is managed by DevOps teams that are part of the IT organization. There are a couple of options for where the MLOps team can be located: either as part of the enterprise architecture team under IT (in organizations where the IT team manages models from multiple BUs), or as part of the central data science and analytics team (in organizations with a mature analytics practice).

Sometimes, the MLOps team can be part of a specific business unit (such as marketing) in organizations where the business unit has special requirements to manage complex models, perhaps in collaboration with the enterprise IT or Data Science teams.

Roles and responsibilities

The team needs to have specific roles for managing data acquisition and data pipelines, preparing the data, training the model, running experiments to select the best models, deploying the model into production, monitoring post-production performance, and managing the infrastructure and workflow automation for the MLOps stack.

Design the MLOps Architecture

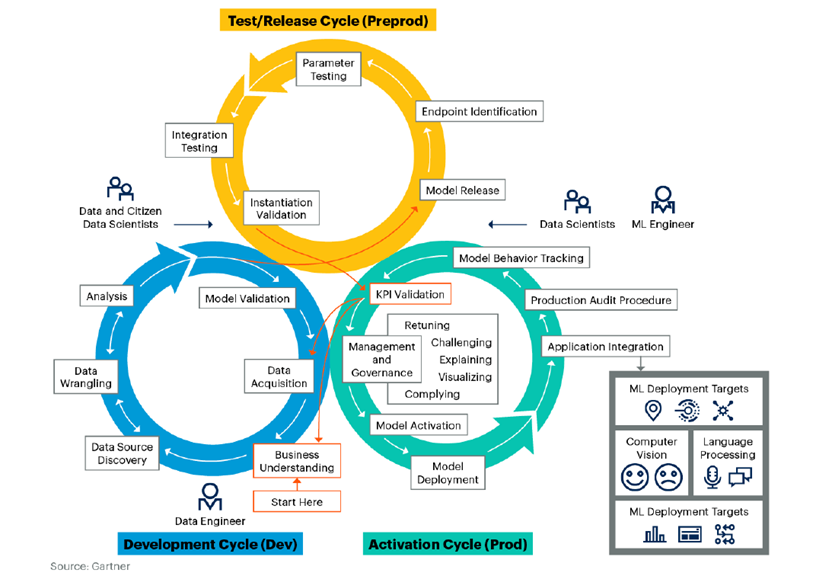

The graphic above shows the typical architecture of an MLOps system.

At a high level, an MLOps system must support three major activities: data acquisition, analysis and modeling, and deployment and integration. Not all components in each of these areas are equally important for every organization adopting MLOps.

The architecture must be guided by design principles such as the following:

- Simple to use for data scientists

- Enables data scientists to deploy and version models rapidly

- Enables the IT team to govern models by collecting metadata

- Ensures appropriate level of monitoring

- Supports collaboration between various teams (business/IT/data science)

- Easy to scale

- Easily interface with a variety of third-party tools for accomplishing tasks

- Effectively version and manage code, data, and models bundled together

- A fully automated approach to package, deploy, and monitor

Realizing these principles in the MLOps platform requires making trade-offs, and the needs of the business should guide them.
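To make one of these principles concrete, here is a minimal sketch of versioning code, data, and models as a single bundle with governance metadata attached. The `ModelBundle` class, its fields, and the sample values are all hypothetical and chosen for illustration; a real MLOps stack would typically rely on a model registry or a data versioning tool rather than hand-rolled hashing.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def fingerprint(payload: bytes) -> str:
    """Return a short, stable content hash for an artifact."""
    return hashlib.sha256(payload).hexdigest()[:12]


@dataclass(frozen=True)
class ModelBundle:
    """Hypothetical record tying a model to the exact code and data behind it."""
    name: str
    code_version: str  # assumed to be a source control commit id
    data_hash: str     # fingerprint of the training data snapshot
    model_hash: str    # fingerprint of the serialized model
    owner: str         # metadata the IT team could use for governance

    def bundle_id(self) -> str:
        # The id changes whenever the code, data, model, or metadata changes.
        record = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return fingerprint(record)


# Placeholder artifacts standing in for real training data and a real model.
training_data = b"age,income,churned\n34,52000,0\n51,87000,1\n"
model_bytes = b"serialized-model-placeholder"

bundle = ModelBundle(
    name="churn-model",
    code_version="abc1234",
    data_hash=fingerprint(training_data),
    model_hash=fingerprint(model_bytes),
    owner="marketing-analytics",
)
print(bundle.bundle_id())
```

Because the bundle id is derived from all of its parts, retraining on a new data snapshot or changing the code produces a new id, which gives governance and monitoring a single handle for "what exactly is running in production".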

Implement the MLOps Platform

According to Gall’s Law:

A complex system that works is invariably found to have evolved from a simple system that worked. A complex system designed from scratch never works and cannot be patched up to make it work. You have to start over with a working simple system.

So, we need to go from the current implementation of MLOps (in whatever rudimentary form) in your organization to a more sophisticated one. Based on the architecture, the MLOps team needs to establish the infrastructure and tools needed to implement the goals.

There are several families of tools: open-source and commercial, general-purpose platforms as well as MLOps-specific tools. The graphic below provides a partial snapshot of the tools available for ML life cycle management. In a future post, we will talk about choosing the right tools for MLOps.

Once the appropriate tools are selected, the next step is to create environments for development, test, and production. The best practice is to provision dev and test environments on-demand.

Then, based on the tools selected, automated workflows need to be set up and integrated within the environments. This allows the data science teams to seamlessly move artifacts from development to testing to production while giving them feedback on how the model performs in the real world.

This is achieved by implementing operational workflows. For example, workflows can be defined to move the model from dev to test to start the experimentation (e.g., A/B testing). Once the experimentation is complete and the model is approved for production, these workflows are used to move the model into a model registry and deploy it to production endpoints so that the monitoring processes can begin.
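The promotion workflow described above can be sketched as a small state machine with an approval gate before production. This is only an illustration under stated assumptions: the stage names, the `ModelRecord` class, and the `promote` function are hypothetical, and a real implementation would be built on whichever workflow and registry tools the team selects.

```python
from dataclasses import dataclass, field

# Stage order assumed for illustration: dev -> test -> production.
STAGES = ["dev", "test", "production"]


@dataclass
class ModelRecord:
    name: str
    version: int
    stage: str = "dev"
    approved: bool = False  # set once experimentation (e.g. A/B testing) is signed off
    history: list = field(default_factory=list)


class PromotionError(Exception):
    """Raised when a model cannot move to the next stage."""


def promote(model: ModelRecord) -> ModelRecord:
    """Move a model one stage forward, enforcing the approval gate."""
    position = STAGES.index(model.stage)
    if position == len(STAGES) - 1:
        raise PromotionError(f"{model.name} is already in production")
    next_stage = STAGES[position + 1]
    if next_stage == "production" and not model.approved:
        raise PromotionError(f"{model.name} needs approval before production")
    model.history.append((model.stage, next_stage))
    model.stage = next_stage
    return model


model = ModelRecord(name="churn-model", version=3)
promote(model)             # dev -> test, experimentation can start
try:
    promote(model)         # blocked: the model is not yet approved
except PromotionError as err:
    print("blocked:", err)
model.approved = True      # experimentation complete and approved
promote(model)             # test -> production, monitoring can begin
print(model.stage, model.history)
```

Keeping the gate inside the workflow rather than in a manual checklist means an unapproved model cannot reach a production endpoint by accident, and the recorded history gives the governance side an audit trail.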

Migrate Pilot Models

Once the above steps are completed, it’s time to migrate a few existing ML models into the new MLOps stack. In this step, 1-2 pilot models are chosen for migration, and a few select users are provided access.

Typically, migration involves a re-implementation of the entire model development life cycle in the new MLOps stack. As shown in the graphic below, the re-implementation has three interconnected phases: Development, Test/Release, and Activation.

Each of these happens in the respective environments, and the model is deployed and integrated into the downstream application in the Activation phase. Going through these phases in the new MLOps stack helps identify issues and gaps and close them before provisioning to the wider audience.
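One way to keep a pilot honest is to track the gaps found in each phase and refuse to move on until they are closed. The sketch below assumes the three phases named above run in order; the `PilotMigration` class and the example gap are hypothetical and meant only to show the idea.

```python
from dataclasses import dataclass, field

# Phase names taken from the text; the strict ordering is an assumption.
PHASES = ("Development", "Test/Release", "Activation")


@dataclass
class PilotMigration:
    model_name: str
    open_gaps: dict = field(default_factory=lambda: {p: [] for p in PHASES})
    completed: list = field(default_factory=list)

    def report_gap(self, phase: str, description: str) -> None:
        """Record an issue discovered while working through a phase."""
        self.open_gaps[phase].append(description)

    def close_gap(self, phase: str, description: str) -> None:
        """Mark a previously reported issue as resolved."""
        self.open_gaps[phase].remove(description)

    def complete_phase(self, phase: str) -> None:
        """Complete the next phase in order, but only if it has no open gaps."""
        if len(self.completed) == len(PHASES):
            raise ValueError("all phases are already complete")
        expected = PHASES[len(self.completed)]
        if phase != expected:
            raise ValueError(f"expected {expected}, got {phase}")
        if self.open_gaps[phase]:
            raise ValueError(f"{phase} still has open gaps: {self.open_gaps[phase]}")
        self.completed.append(phase)

    def ready_for_scale_out(self) -> bool:
        return tuple(self.completed) == PHASES


pilot = PilotMigration("churn-model")
pilot.complete_phase("Development")
pilot.report_gap("Test/Release", "no automated data validation step")
# Calling complete_phase("Test/Release") here would raise until the gap is closed.
pilot.close_gap("Test/Release", "no automated data validation step")
pilot.complete_phase("Test/Release")
pilot.complete_phase("Activation")
print(pilot.ready_for_scale_out())
```

Even a lightweight record like this makes it clear which gaps were found during the pilot and whether they were resolved before the stack is opened to a wider audience.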

Scale-Out

In this step, the remaining existing models are migrated to the new MLOps stack. All users are provided access to the new stack to build, deploy, monitor, and govern the ML models. The new MLOps stack becomes embedded into the organization’s business processes, keeping models fresh and up to date so that they deliver higher-quality predictions and better decisions.